Publications

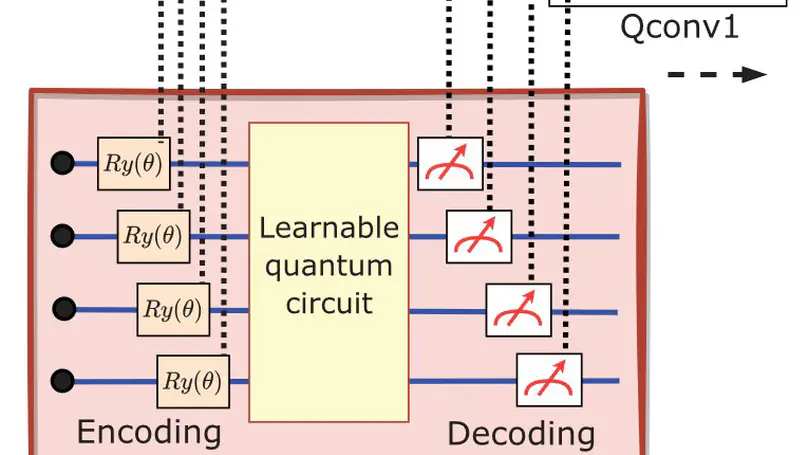

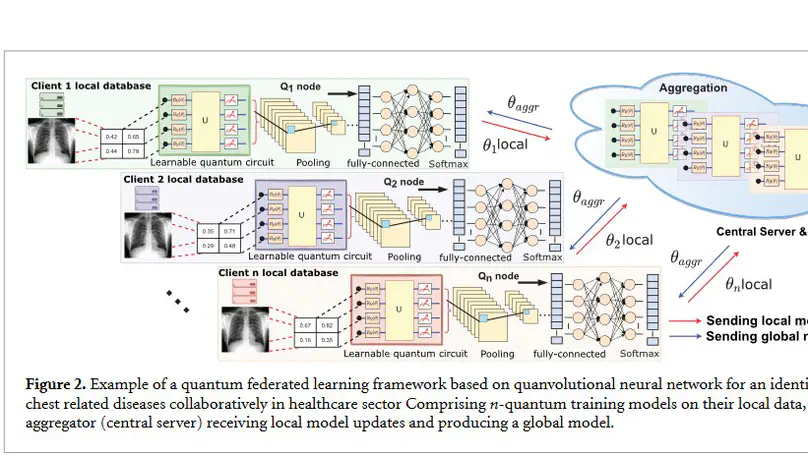

In recent years, researchers have actively promoted federated machine learning as a way to ease the privacy concerns of data owners. The combination of machine learning and quantum computing is currently a prominent industry topic and is positioned to become a major disruptor, offering an effective new tool for reshaping industries ranging from healthcare to finance. Data sharing poses a significant hurdle for large-scale machine learning in many industries, so it is natural to study an advanced quantum computing ecosystem composed of heterogeneous federated resources. In this work, we address data governance and privacy by developing a quantum federated learning approach that can be executed efficiently on quantum hardware in the noisy intermediate-scale quantum (NISQ) era. We present a federated hybrid quantum–classical algorithm, a quanvolutional neural network, that is trained in a distributed manner across different sites without exchanging data. The hybrid algorithm requires only small quantum circuits to produce meaningful features for image classification tasks, which makes it well suited to near-term quantum computing. The primary goal of this work is to evaluate the potential benefits of hybrid quantum–classical and classical–quantum convolutional neural networks on datasets partitioned among several healthcare institutions (clients), using both non-IID (not independent and identically distributed) partitions and real-world partitions. We investigated the performance of a collaborative quanvolutional neural network on two medical machine learning datasets, COVID-19 and MedNIST. Extensive experiments validate the robustness and feasibility of the proposed quantum federated learning framework. Our findings show a 2%–39% reduction in the number of communication rounds required compared with the federated stochastic gradient descent (FedSGD) approach.
The hybrid federated framework maintained high classification testing accuracy and generalizability, even when the medical data were unevenly distributed among clients.
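The abstract compares the federated quanvolutional network against federated stochastic gradient descent. As a rough illustration of how a federated server can combine client updates without ever seeing raw data, the sketch below performs FedAvg-style aggregation, weighting each client's parameters by its local dataset size. The function name `fedavg`, the flat-list parameter layout, and the client values are hypothetical; this is not necessarily the aggregation rule used in the paper.

```python
from typing import List, Sequence


def fedavg(client_weights: Sequence[Sequence[float]],
           client_sizes: Sequence[int]) -> List[float]:
    """Dataset-size-weighted average of client parameter vectors (FedAvg-style).

    Hypothetical helper: parameters are assumed to be flat lists of floats.
    """
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need at least one client and one size per client")
    total = sum(client_sizes)
    dim = len(client_weights[0])
    global_weights = [0.0] * dim
    for weights, size in zip(client_weights, client_sizes):
        if len(weights) != dim:
            raise ValueError("all clients must share the same parameter shape")
        for i, w in enumerate(weights):
            # Only parameters leave each site; raw patient data never does.
            global_weights[i] += w * size / total
    return global_weights


# Three hypothetical hospitals with unevenly sized (non-IID) local datasets.
clients = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
sizes = [100, 300, 600]
print(fedavg(clients, sizes))
```

Weighting by dataset size keeps large sites from being diluted by small ones; under strongly non-IID splits this weighting is one of the design choices that most affects how many communication rounds are needed.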

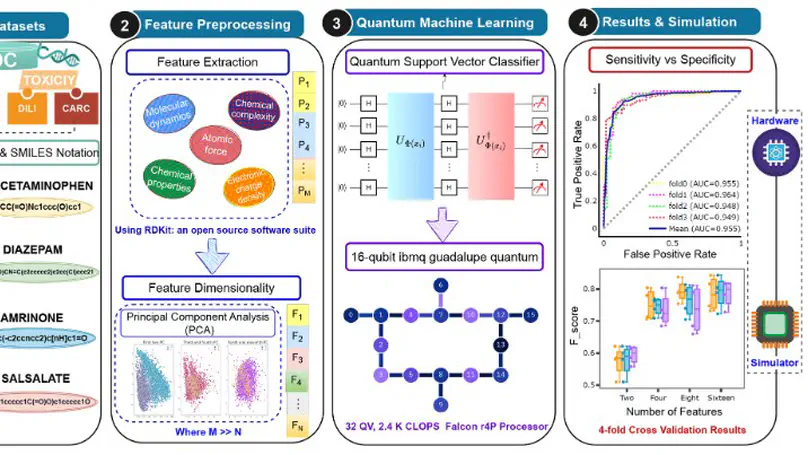

In the drug discovery paradigm, evaluating the absorption, distribution, metabolism, and excretion (ADME) and toxicity properties of new chemical entities is one of the most critical issues: it is time-consuming, immensely expensive, and poses formidable challenges in pharmaceutical R&D. In recent years, emerging technologies such as artificial intelligence (AI), big data, and cloud computing have garnered great attention for predicting the ADME and toxicity of molecules. The blend of quantum computation and machine learning has likewise attracted considerable attention in fields ranging from chemistry to biomedicine and several engineering disciplines. Quantum computers have the potential to advance high-throughput experimental techniques and the screening of billions of molecules, reducing the development costs and time associated with drug discovery. Motivated by the efficiency of quantum kernel methods, we propose a quantum machine learning (QML) framework that combines a classical support vector classifier algorithm with a kernel-based quantum classifier. To demonstrate the feasibility of the framework, a string kernel based on the simplified molecular input line entry system (SMILES) notation, combined with a quantum support vector classifier, is used to evaluate the ADME-Tox properties of chemicals and drugs. The framework is validated and assessed via large-scale simulations. In our numerical simulations, the quantum model outperformed its classical counterparts in terms of the area under the receiver operating characteristic curve (AUC ROC; 0.80–0.95) for predicting outcomes on ADME-Tox datasets for small molecules with different numbers of features. Deploying the proposed framework in the pharmaceutical industry could be highly valuable for making the best possible decisions.
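Quantum kernel methods of this kind plug a state-overlap (fidelity) kernel into a support vector classifier. The minimal sketch below assumes a simple product feature map in which each scaled feature is encoded as an RY rotation on its own qubit; this is not the paper's SMILES string kernel. Under that assumption the fidelity has the closed form of a product over features of cos²((x_i − y_i)/2), so it can be simulated classically. The descriptor values and function names are hypothetical.

```python
import math
from typing import List, Sequence


def angle_encoding_kernel(x: Sequence[float], y: Sequence[float]) -> float:
    """Fidelity |<psi(x)|psi(y)>|^2 for a product RY angle-encoding feature map.

    Each feature is encoded as RY(x_i)|0> = cos(x_i/2)|0> + sin(x_i/2)|1>,
    so the overlap factorizes into per-qubit terms cos((x_i - y_i)/2).
    """
    if len(x) != len(y):
        raise ValueError("feature vectors must have equal length")
    k = 1.0
    for xi, yi in zip(x, y):
        k *= math.cos((xi - yi) / 2.0) ** 2
    return k


def gram_matrix(data: Sequence[Sequence[float]]) -> List[List[float]]:
    """Kernel (Gram) matrix over all pairs of samples."""
    return [[angle_encoding_kernel(a, b) for b in data] for a in data]


# Hypothetical, already-scaled molecular descriptors for three compounds.
X = [[0.1, 0.5], [0.2, 0.4], [2.5, 3.0]]
for row in gram_matrix(X):
    print([round(v, 3) for v in row])
```

A Gram matrix like this can be passed to scikit-learn's `SVC(kernel="precomputed")`. Entangling feature maps, which are what make quantum kernels hard to simulate classically, do not factorize this way.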

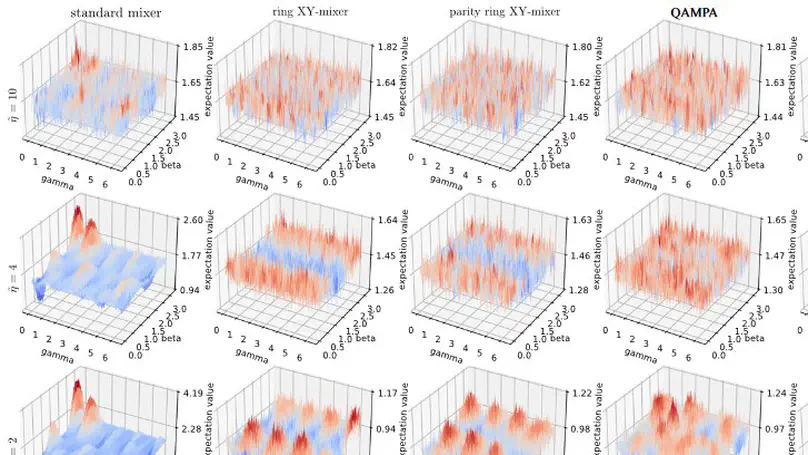

We present a detailed study of portfolio optimization using different versions of the quantum approximate optimization algorithm (QAOA). For a given list of assets, the portfolio optimization problem is formulated as a quadratic binary optimization problem constrained on the number of assets contained in the portfolio. QAOA has been suggested as a possible candidate for solving this problem (and similar combinatorial optimization problems) more efficiently than classical computers when the number of assets is sufficiently large. However, the practical implementation of this algorithm requires careful consideration of several technical issues, not all of which are discussed in the present literature. This article intends to fill this gap and thereby provide the reader with a useful guide for applying QAOA to the portfolio optimization problem (and similar problems). In particular, we discuss several possible choices of the variational form and of the classical algorithms used to find the corresponding optimized parameters. With a view to applying QAOA on error-prone NISQ hardware, we also analyse the influence of statistical sampling errors (due to a finite number of shots) and of gate and readout errors (due to imperfect quantum hardware). Finally, we define a criterion for distinguishing between ‘easy’ and ‘hard’ instances of the portfolio optimization problem.
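QAOA scores bitstrings in which bit i indicates whether asset i is held. The sketch below assumes one common penalty formulation, q·xᵀΣx − μᵀx + λ(Σᵢ xᵢ − B)², where the risk aversion q, expected returns μ, covariance Σ, budget B, and penalty weight λ are all hypothetical. It brute-forces that cost to obtain the reference optimum against which QAOA samples can be compared on small instances. The paper may instead enforce the cardinality constraint through its choice of variational form.

```python
import itertools
from typing import Sequence, Tuple


def portfolio_cost(x: Sequence[int], mu: Sequence[float],
                   sigma: Sequence[Sequence[float]], q: float,
                   budget: int, penalty: float) -> float:
    """Penalized objective: q * risk - expected return + penalty * (#assets - budget)^2."""
    n = len(x)
    risk = sum(sigma[i][j] * x[i] * x[j] for i in range(n) for j in range(n))
    ret = sum(mu[i] * x[i] for i in range(n))
    return q * risk - ret + penalty * (sum(x) - budget) ** 2


def brute_force(mu, sigma, q, budget, penalty) -> Tuple[float, Tuple[int, ...]]:
    """Evaluate all 2^n bitstrings; only feasible for a handful of assets."""
    return min((portfolio_cost(x, mu, sigma, q, budget, penalty), x)
               for x in itertools.product((0, 1), repeat=len(mu)))


# Hypothetical expected returns and covariance matrix for four assets.
mu = [0.10, 0.07, 0.12, 0.03]
sigma = [[0.05, 0.01, 0.02, 0.00],
         [0.01, 0.03, 0.01, 0.00],
         [0.02, 0.01, 0.08, 0.01],
         [0.00, 0.00, 0.01, 0.01]]
cost, best = brute_force(mu, sigma, q=0.5, budget=2, penalty=1.0)
print(best, round(cost, 4))
```

The penalty weight must be large enough that violating the budget never pays off. Making it much larger than necessary, however, flattens the rest of the landscape, which is one of the practical tuning issues QAOA users face.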

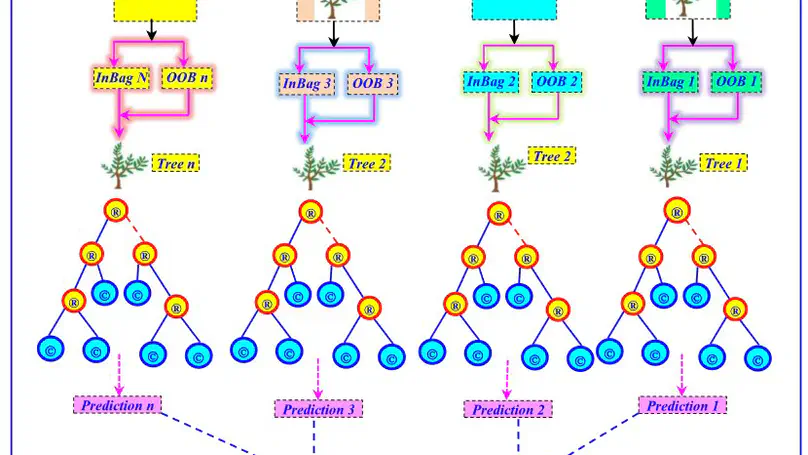

This study aims to evaluate the usefulness and effectiveness of four machine learning (ML) models for modelling cyanobacteria blue-green algae (CBGA) in two rivers located in the USA. The proposed modelling framework was based on establishing a link between five water quality variables and the concentration of CBGA. For this purpose, artificial neural network (ANN), extreme learning machine (ELM), random forest regression (RFR), and random vector functional link (RVFL) models were developed. First, the four models were developed using only the water quality variables. Second, based on the results of the first step, a new modelling strategy based on signal decomposition preprocessing was introduced: the empirical mode decomposition (EMD), the variational mode decomposition (VMD), and the empirical wavelet transform (EWT) were used to decompose the water quality variables into several subcomponents, and the resulting intrinsic mode functions (IMFs) and multiresolution analysis (MRA) components were used as new input variables for the ML models. The results show that (i) among the single models, the RFR model achieved good predictive accuracy, with R and NSE values of ≈0.914 and ≈0.833 for the first station and ≈0.944 and ≈0.884 for the second station, while the other models (ANN, RVFL, and ELM) failed to provide a good estimate of CBGA; (ii) the decomposition methods significantly improved the performance of the individual models; (iii) among the three decomposition methods, EMD was superior to VMD and EWT; and (iv) the ANN and RFR were more accurate than the ELM and RVFL models, achieving high R and NSE values of ≈0.983 and ≈0.967, and ≈0.989 and ≈0.976, respectively.
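R and NSE are the two scores used above to compare the models. A minimal pure-Python sketch of their standard definitions follows: the Pearson correlation coefficient, and the Nash–Sutcliffe efficiency NSE = 1 − Σ(o − s)² / Σ(o − ō)², where o are observations and s simulations. The series are hypothetical, not CBGA data.

```python
import math
from typing import Sequence


def pearson_r(obs: Sequence[float], sim: Sequence[float]) -> float:
    """Pearson correlation coefficient R between observed and simulated series."""
    n = len(obs)
    mo, ms = sum(obs) / n, sum(sim) / n
    cov = sum((o - mo) * (s - ms) for o, s in zip(obs, sim))
    var_o = sum((o - mo) ** 2 for o in obs)
    var_s = sum((s - ms) ** 2 for s in sim)
    return cov / math.sqrt(var_o * var_s)


def nse(obs: Sequence[float], sim: Sequence[float]) -> float:
    """Nash-Sutcliffe efficiency: 1 - (sum of squared errors) / (variance of obs)."""
    mo = sum(obs) / len(obs)
    sse = sum((o - s) ** 2 for o, s in zip(obs, sim))
    sst = sum((o - mo) ** 2 for o in obs)
    return 1.0 - sse / sst


# Hypothetical observed and predicted concentrations.
observed = [1.0, 2.0, 3.0, 4.0, 5.0]
predicted = [1.1, 1.9, 3.2, 3.8, 5.1]
print(round(pearson_r(observed, predicted), 3), round(nse(observed, predicted), 3))
```

R only measures linear association, so a biased model can still score R ≈ 1. NSE penalizes bias as well, which is why the two are usually reported together.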

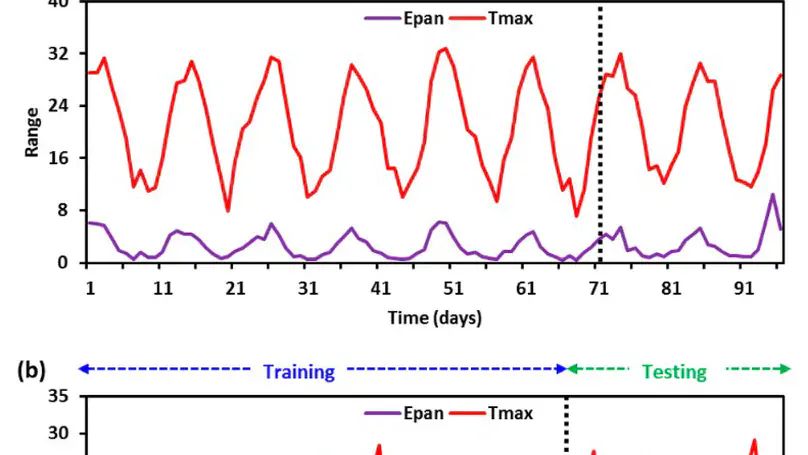

In the present study, two innovative techniques, namely Deep Learning (DL) and Gradient Boosting Machine (GBM) models, are developed using a maximum air temperature ‘univariate modeling scheme’ to model the monthly pan evaporation (Epan) process. Monthly air temperature and pan evaporation data are used to build the predictive models, which are evaluated for the Kiashahr meteorological station in northern Iran and the Ranichauri station in Uttarakhand State, India. The deep learning model performed best at Kiashahr station on the testing dataset, with MAE = 0.5691 mm/month, RMSE = 0.7111 mm/month, NSE = 0.7496, and IOA = 0.9413. In the semi-arid climate of Iran, both methods modeled monthly Epan well, although DL predicted monthly Epan better than GBM. The deep learning model also achieved the highest accuracy at Ranichauri station in the testing stage, with MAE = 0.3693 mm/month, RMSE = 0.4357 mm/month, NSE = 0.8344, and IOA = 0.9507. Overall, the results demonstrate the superior performance of DL-based models at both study stations, and such models could also be applied to other environmental modeling problems.
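MAE, RMSE, and IOA (Willmott's index of agreement) quantify the Epan errors reported above. The sketch below uses the standard formulas, with IOA = 1 − Σ(s − o)² / Σ(|s − ō| + |o − ō|)². The monthly values are hypothetical and not taken from the study.

```python
import math
from typing import Sequence


def mae(obs: Sequence[float], sim: Sequence[float]) -> float:
    """Mean absolute error, in the units of the data (e.g. mm/month)."""
    return sum(abs(o - s) for o, s in zip(obs, sim)) / len(obs)


def rmse(obs: Sequence[float], sim: Sequence[float]) -> float:
    """Root mean square error; weights large misses more heavily than MAE."""
    return math.sqrt(sum((o - s) ** 2 for o, s in zip(obs, sim)) / len(obs))


def ioa(obs: Sequence[float], sim: Sequence[float]) -> float:
    """Willmott's index of agreement d, between 0 (no agreement) and 1 (perfect)."""
    mo = sum(obs) / len(obs)
    num = sum((s - o) ** 2 for o, s in zip(obs, sim))
    den = sum((abs(s - mo) + abs(o - mo)) ** 2 for o, s in zip(obs, sim))
    return 1.0 - num / den


# Hypothetical monthly pan evaporation (mm/month): observed vs. model output.
epan_obs = [2.1, 3.4, 4.8, 5.9, 6.3, 5.1]
epan_sim = [2.4, 3.1, 4.5, 6.2, 6.0, 5.5]
print(round(mae(epan_obs, epan_sim), 4), round(rmse(epan_obs, epan_sim), 4),
      round(ioa(epan_obs, epan_sim), 4))
```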

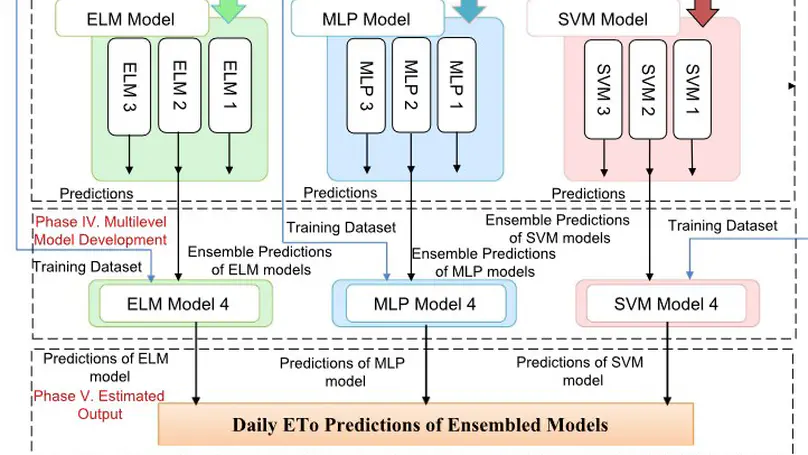

Climatic change causes variation in meteorological conditions, which influences crop water requirements, evapotranspiration, and water allocation in agro-meteorology and agriculture. Accurate estimation of evapotranspiration is important for using water efficiently and for scheduling irrigation. The overarching goal of this paper is to investigate the abilities and applicability of three supervised machine learning models, Extreme Learning Machine (ELM), Multi-layer Perceptron Neural Network, and Support Vector Machine (SVM), for modeling daily evapotranspiration. Further, a three-layer multi-model ensemble machine learning approach is presented to predict evapotranspiration. The first layer consists of different statistical models that produce individual forecasts, and a blending approach combines these forecasts into an ensemble that yields probabilistic forecasts. In the second layer, three ensemble models are trained to predict evapotranspiration using the previous layer's predictions and the training data. In the third layer, accuracy is estimated by tuning the parameters of the second-layer ensemble model. All statistical models performed well in modeling daily evapotranspiration (Nash–Sutcliffe efficiency (NSE) = 0.93–0.99, coefficient of determination (r²) = 0.93–0.99, accuracy (ACC) = 80–99, mean square error (MSE) = 0.0103–0.1516). In particular, the ensemble method with SVM achieved high accuracy (99.46% to 99.72%) in predicting daily evapotranspiration, with a correlation coefficient close to 1 on the training, validation, and testing datasets. Its root mean square error (RMSE) (0.0085 to 0.0935) and mean absolute error (MAE) (0.0614 to 0.0639) are the lowest among the ensemble machine learning models.
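Training a second-layer model on the first layer's predictions is the idea behind stacking (blending). As a deliberately simple stand-in for the paper's second-layer ensemble models, the sketch below fits a linear meta-learner that finds least-squares weights for two base models' predictions by solving the 2×2 normal equations. The function name and data are hypothetical.

```python
from typing import Sequence, Tuple


def fit_blend_weights(p1: Sequence[float], p2: Sequence[float],
                      y: Sequence[float]) -> Tuple[float, float]:
    """Least-squares weights (w1, w2) minimizing sum((y - w1*p1 - w2*p2)^2)."""
    a11 = sum(a * a for a in p1)
    a12 = sum(a * b for a, b in zip(p1, p2))
    a22 = sum(b * b for b in p2)
    b1 = sum(a * t for a, t in zip(p1, y))
    b2 = sum(b * t for b, t in zip(p2, y))
    det = a11 * a22 - a12 * a12
    if abs(det) < 1e-12:
        raise ValueError("base-model predictions are (nearly) collinear")
    # Cramer's rule on [[a11, a12], [a12, a22]] @ [w1, w2] = [b1, b2].
    w1 = (b1 * a22 - b2 * a12) / det
    w2 = (a11 * b2 - a12 * b1) / det
    return w1, w2


# Hypothetical daily ET predictions (mm/day) from two first-layer models.
p1 = [2.1, 3.0, 4.2, 5.1]
p2 = [1.8, 3.3, 3.9, 5.4]
y = [0.3 * a + 0.7 * b for a, b in zip(p1, p2)]  # synthetic target for the demo
w1, w2 = fit_blend_weights(p1, p2, y)
print(round(w1, 3), round(w2, 3))
```

In practice the meta-learner should be fit on hold-out or out-of-fold base-model predictions; fitting it on in-sample predictions rewards base models that overfit the training data.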

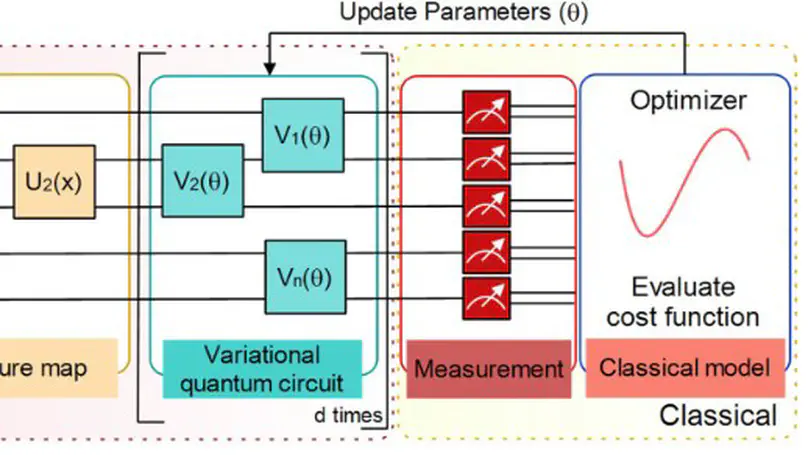

In quantum computing, variational quantum algorithms (VQAs) are well suited to finding optimal combinations in applications ranging from chemistry to finance. Training VQAs with gradient descent has shown good convergence. At this early stage, however, variational quantum circuits executed on noisy intermediate-scale quantum (NISQ) devices produce noisy outputs, and, just like classical deep learning models, they suffer from vanishing gradients. It is therefore a realistic goal to study the topology of the loss landscape and to visualize the curvature information and trainability of these circuits in the presence of vanishing gradients. In this paper, we calculate the Hessian and visualize the loss landscape of variational quantum classifiers (VQCs) at different points in parameter space. We interpret the curvature information of VQCs and show the convergence of the loss function. This helps us better understand the behavior of variational quantum circuits and tackle optimization problems efficiently. We investigated VQCs via the Hessian on quantum computers, starting with a simple 4-bit parity problem to gain insight into the practical behavior of the Hessian, and then thoroughly analyzed the behavior of the Hessian's eigenvalues while training a VQC on the Diabetes dataset. Finally, we show how an adaptive Hessian-based learning rate can influence convergence when training variational circuits.
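For gates of the form exp(−iθP/2) with P a Pauli operator, each Hessian entry of a circuit expectation can be obtained from four shifted evaluations (a second-order parameter-shift rule). The sketch below applies this rule to a hypothetical two-qubit toy cost with a closed form, cos(θ₀)·cos(θ₁), so the result can be checked against the analytic Hessian. It also computes the eigenvalues that describe the curvature along principal directions. On hardware, each call to the cost would be a shot-estimated expectation value rather than an exact function; this is not the paper's classifier.

```python
import math
from typing import Callable, List, Sequence

Params = Sequence[float]


def toy_cost(theta: Params) -> float:
    """<Z0 Z1> after RY(theta[0]) and RY(theta[1]) act on |00>: cos(t0) * cos(t1)."""
    return math.cos(theta[0]) * math.cos(theta[1])


def _shifted(f: Callable[[Params], float], theta: Params,
             i: int, j: int, si: float, sj: float) -> float:
    t = list(theta)
    t[i] += si
    t[j] += sj  # when i == j both shifts land on the same parameter
    return f(t)


def parameter_shift_hessian(f: Callable[[Params], float],
                            theta: Params) -> List[List[float]]:
    """Hessian via four pi/2-shifted evaluations per entry (Pauli-rotation gates)."""
    s = math.pi / 2
    n = len(theta)
    return [[(_shifted(f, theta, i, j, s, s) - _shifted(f, theta, i, j, s, -s)
              - _shifted(f, theta, i, j, -s, s) + _shifted(f, theta, i, j, -s, -s)) / 4.0
             for j in range(n)] for i in range(n)]


def eig2x2_symmetric(h: List[List[float]]) -> List[float]:
    """Eigenvalues of a symmetric 2x2 matrix, smallest first."""
    mean = (h[0][0] + h[1][1]) / 2.0
    radius = math.sqrt(((h[0][0] - h[1][1]) / 2.0) ** 2 + h[0][1] ** 2)
    return [mean - radius, mean + radius]


theta0 = [0.3, 1.1]
H = parameter_shift_hessian(toy_cost, theta0)
print(H)
print(eig2x2_symmetric(H))
```

Mixed-sign eigenvalues indicate a saddle point, and eigenvalues close to zero indicate the flat regions associated with vanishing gradients. One hypothetical use of the largest eigenvalue magnitude is to scale the learning rate inversely with it.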

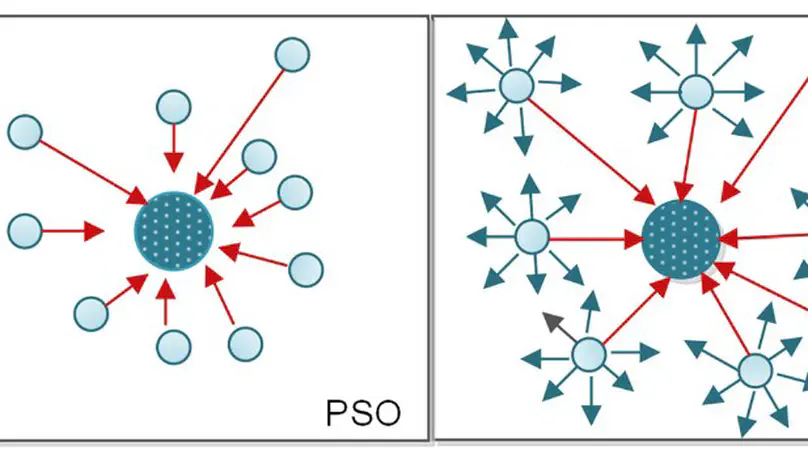

Motivated by particle swarm optimization (PSO) and quantum computing theory, we present a quantum-behaved variant of PSO (QPSO) mutated with a Cauchy operator and a natural selection mechanism from evolutionary computation (QPSO-CD). The performance of the proposed hybrid quantum-behaved particle swarm optimization with Cauchy distribution (QPSO-CD) is investigated and compared with its counterparts on a set of benchmark problems. Moreover, QPSO-CD is applied to well-studied constrained engineering problems to investigate its applicability. Further, the correctness and time complexity of QPSO-CD are analyzed and compared with those of classical PSO. It is shown that QPSO-CD handles such real-life problems efficiently and attains superior solutions for most of the problems. The experimental results show that QPSO combined with the Cauchy distribution and the natural selection strategy outperforms other variants in terms of stability and convergence.
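The ingredients named above can be combined in a compact loop: the standard QPSO position update around a local attractor using the mean best position, a Cauchy mutation of the global best, and a natural-selection step. The sketch below is a minimal illustration under stated assumptions, not the paper's exact QPSO-CD. The linear β schedule (1.0 to 0.5), the greedy acceptance of the Cauchy-mutated global best, the shrinking mutation scale, and the Angeline-style selection (positions of the worse half replaced by copies of the better half) are all assumptions, tested here on the sphere function.

```python
import math
import random
from typing import Callable, List, Sequence, Tuple


def sphere(x: Sequence[float]) -> float:
    return sum(v * v for v in x)


def qpso_cd(f: Callable[[Sequence[float]], float], dim: int, n_particles: int = 20,
            iters: int = 200, lo: float = -5.0, hi: float = 5.0,
            seed: int = 0) -> Tuple[List[float], float]:
    rng = random.Random(seed)
    xs = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    cur_f = [f(x) for x in xs]
    pbest = [list(x) for x in xs]
    pbest_f = list(cur_f)
    k0 = min(range(n_particles), key=lambda k: pbest_f[k])
    gbest, gbest_f = list(pbest[k0]), pbest_f[k0]
    for t in range(iters):
        beta = 1.0 - 0.5 * t / iters  # contraction-expansion coefficient (assumed schedule)
        mbest = [sum(p[d] for p in pbest) / n_particles for d in range(dim)]
        for k in range(n_particles):
            for d in range(dim):
                phi = rng.random()
                attractor = phi * pbest[k][d] + (1.0 - phi) * gbest[d]
                u = 1.0 - rng.random()  # in (0, 1], so log(1/u) is finite
                step = beta * abs(mbest[d] - xs[k][d]) * math.log(1.0 / u)
                xs[k][d] = attractor + step if rng.random() < 0.5 else attractor - step
            cur_f[k] = f(xs[k])
            if cur_f[k] < pbest_f[k]:
                pbest[k], pbest_f[k] = list(xs[k]), cur_f[k]
                if cur_f[k] < gbest_f:
                    gbest, gbest_f = list(xs[k]), cur_f[k]
        # Cauchy mutation of the global best, kept only if it improves (assumption).
        scale = 0.1 * (hi - lo) * (1.0 - t / iters)
        trial = [v + scale * math.tan(math.pi * (rng.random() - 0.5)) for v in gbest]
        trial_f = f(trial)
        if trial_f < gbest_f:
            gbest, gbest_f = trial, trial_f
        # Natural selection: worse half of current positions replaced by the better half.
        order = sorted(range(n_particles), key=lambda k: cur_f[k])
        half = n_particles // 2
        for good, bad in zip(order[:half], order[n_particles - half:]):
            xs[bad] = list(xs[good])
            cur_f[bad] = cur_f[good]
    return gbest, gbest_f


best, best_f = qpso_cd(sphere, dim=2)
print(best, best_f)
```

The heavy tails of the Cauchy distribution occasionally produce large jumps, which helps the global best escape local optima; the selection step trades some diversity for faster convergence.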

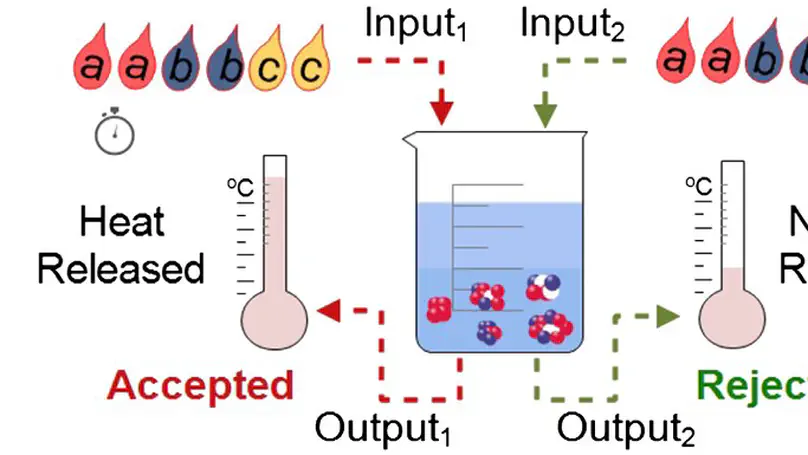

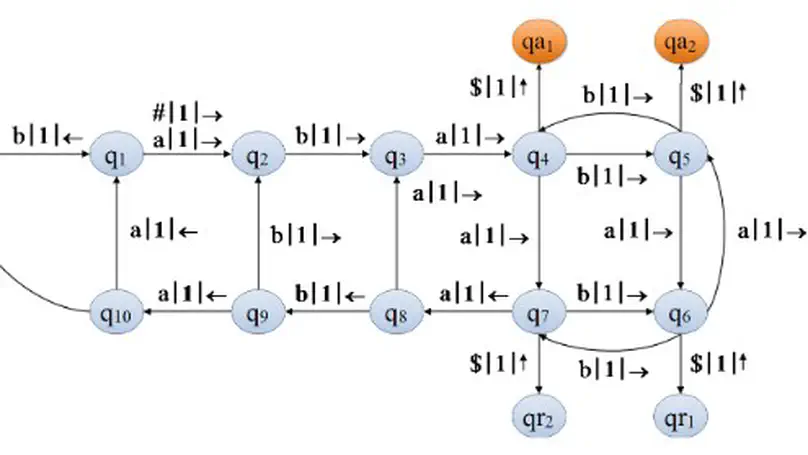

In recent years, interest in modeling has extended significantly from the molecular level to the atomic and quantum levels. Computational chemistry plays a significant role in designing computational models for the operation and simulation of systems ranging from atoms and molecules to industrial processes, a role driven by tremendous increases in computing power and algorithmic efficiency. The representation of chemical reactions in thermodynamic terms using classical automata theory has had a great influence on computer science, so studying chemical information processing with quantum computational models is a natural goal. In this study, we model chemical reactions using two-way quantum finite automata that halt in linear time. Additionally, classical pushdown automata with multiple stacks can be designed for such chemical reactions. It is shown that computational versatility can be increased by combining chemical accept/reject signatures with quantum automata models.

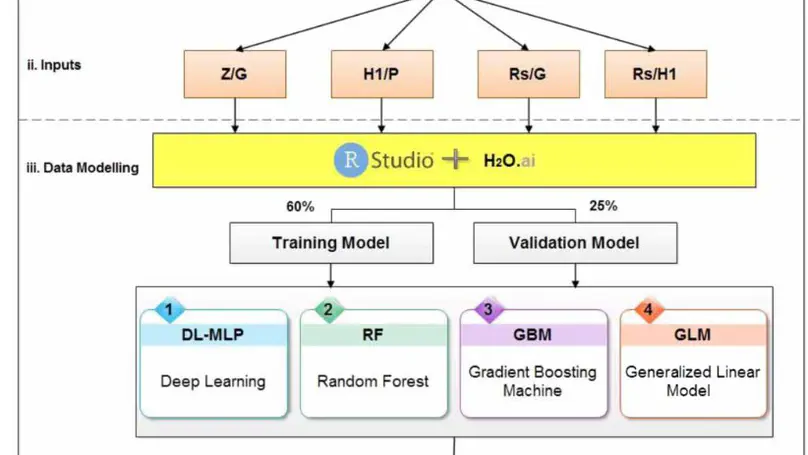

Gates in dams and irrigation canals are used to control discharge or regulate the water surface. To compute the discharge under a gate, the discharge coefficient (Cd) must first be determined precisely. From a novel point of view, this study investigates the effect of sill shape under a vertical sluice gate on Cd, using four artificial intelligence methods to estimate Cd: (i) random forest (RF), (ii) deep learning (DL), (iii) gradient boosting machine (GBM), and (iv) generalized linear model (GLM). A sluice gate with twelve different sill forms was fabricated and tested at the University of Tabriz, Iran. Different flow rates and four gate openings were considered in the hydraulic laboratory, giving a total of 180 test runs. The results showed that installing a sill under the vertical gate has a positive effect on flow discharge. Sill shapes can be characterized by their hydraulic radius (Rs). A sensitivity analysis of the dimensionless parameters showed that Rs/G (the ratio of the sill's hydraulic radius to the gate opening G) plays a significant role in determining Cd. A semi-circular sill increases Cd more than the other shapes.
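To make the role of Cd concrete: for free flow under a vertical sluice gate, a standard relation is Q = Cd·b·G·√(2g·y₁), where b is the channel width, G the gate opening, and y₁ the upstream depth. The sketch below assumes this relation, which may not be the exact definition used in the study, and back-calculates Cd from one hypothetical laboratory run. It also computes the dimensionless ratio Rs/G, taking Rs as sill cross-sectional area divided by wetted perimeter; that definition is likewise an assumption.

```python
import math

G_ACCEL = 9.81  # gravitational acceleration, m/s^2


def discharge_coefficient(q: float, width: float, gate_opening: float,
                          upstream_depth: float, g: float = G_ACCEL) -> float:
    """Cd from the free-flow sluice-gate relation Q = Cd * b * G * sqrt(2 g y1), SI units."""
    return q / (width * gate_opening * math.sqrt(2.0 * g * upstream_depth))


def rs_over_g(sill_area: float, sill_wetted_perimeter: float, gate_opening: float) -> float:
    """Dimensionless Rs/G, assuming Rs = A / P (area over wetted perimeter)."""
    return (sill_area / sill_wetted_perimeter) / gate_opening


# Hypothetical laboratory run: Q = 10 L/s, b = 0.2 m, G = 3 cm, y1 = 0.25 m.
cd = discharge_coefficient(q=0.01, width=0.2, gate_opening=0.03, upstream_depth=0.25)
print(round(cd, 3))
```

Each of the 180 runs would yield one measured Cd in this way; those values, together with dimensionless inputs such as Rs/G, are the kind of targets and features the RF, DL, GBM, and GLM models learn from.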

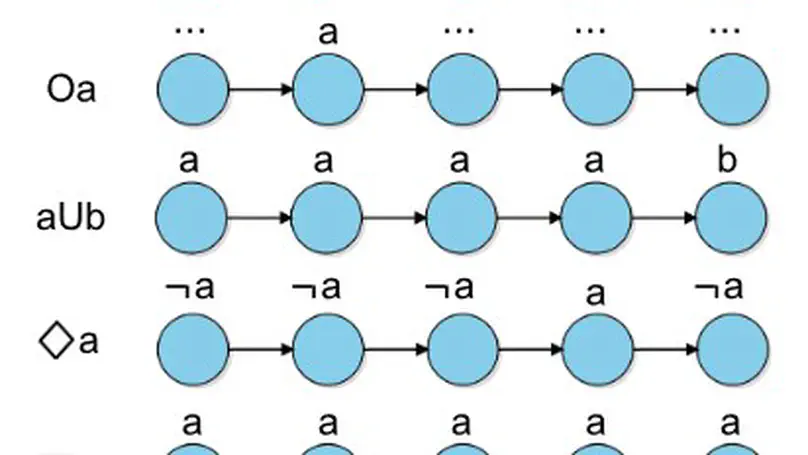

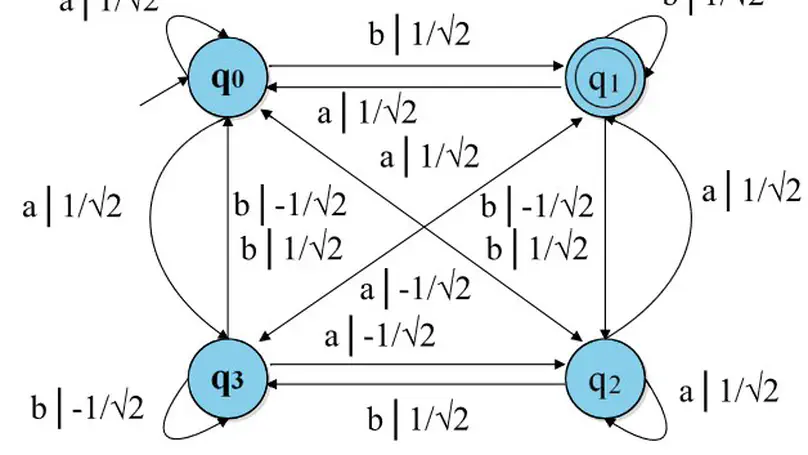

Linear temporal logic (LTL) is widely used in model checking to express system specifications. The relationship between automata theory and logic has had a great influence on computer science, so investigating the relationship between quantum finite automata and linear temporal logic is a natural goal. In this paper, we present a construction of quantum finite automata on finite words from linear-time temporal logic formulas. Further, we explore the relation between quantum finite automata and linear temporal logic in terms of language recognition and acceptance probability. We show that the class of languages accepted by quantum finite automata is definable in linear temporal logic, except for measure-once one-way quantum finite automata.
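The acceptance-probability semantics referred to above can be made concrete for the measure-once one-way model (MO-1QFA) singled out in the abstract. Such an automaton applies one unitary per input symbol and measures only once, at the end of the word. The sketch below is a generic textbook-style example, not a construction from the paper. The hypothetical single-letter automaton rotates a qubit by 2π/p on each `a`, so the word aᵏ is accepted with probability cos²(2πk/p), which equals 1 exactly when k is a multiple of p.

```python
import math
from typing import Dict, List, Sequence

Matrix = List[List[float]]


def apply(u: Matrix, state: List[float]) -> List[float]:
    """Matrix-vector product for a small unitary acting on the amplitude vector."""
    return [sum(u[r][c] * state[c] for c in range(len(state))) for r in range(len(u))]


def mo1qfa_accept_prob(word: str, unitaries: Dict[str, Matrix],
                       start: Sequence[float], accepting: Sequence[int]) -> float:
    """Measure-once 1QFA: evolve through the whole word, then measure once."""
    state = list(start)
    for symbol in word:
        state = apply(unitaries[symbol], state)
    return sum(abs(state[q]) ** 2 for q in accepting)


def rotation_qfa(p: int) -> Dict[str, Matrix]:
    """Hypothetical automaton over {a}: rotate by 2*pi/p per letter."""
    t = 2 * math.pi / p
    return {"a": [[math.cos(t), -math.sin(t)], [math.sin(t), math.cos(t)]]}


U = rotation_qfa(5)
for k in range(11):
    print(k, round(mo1qfa_accept_prob("a" * k, U, [1.0, 0.0], [0]), 4))
```

Because evolution between the start and the single final measurement is purely unitary, the acceptance probability depends only on the accumulated rotation; this periodicity is one way to see why measure-once automata behave differently from the other models.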

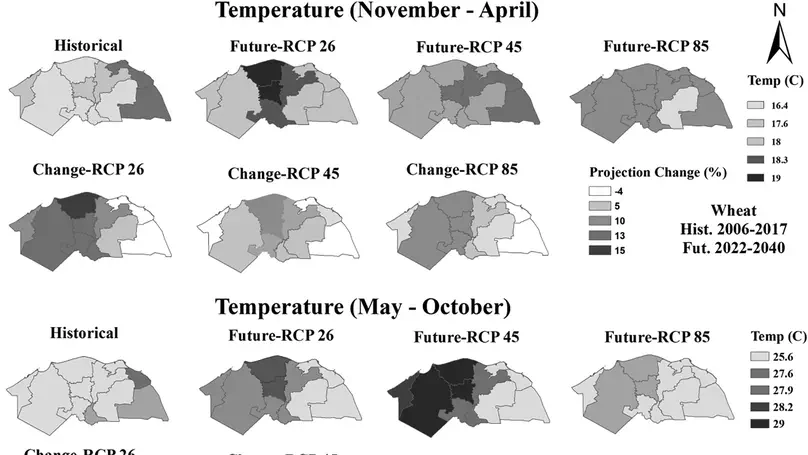

Spatial-temporal information on different water resources is essential to manage water rationally, develop it sustainably, and use it optimally. This study focused on simulating the future water footprint (WF) of two agronomically important crops (wheat and maize) in the Nile Delta using a deep neural network (DNN). The DNN model was calibrated with 2006–2014 data and validated with 2015–2017 data. Future data (2022–2040) were obtained from three Representative Concentration Pathways (RCP 2.6, 4.5, and 8.5) and incorporated into the DNN prediction set. The coefficient of determination between historical and predicted crop evapotranspiration (ETc) ranged from 0.92 to 0.97 for the two crops. The predicted yields of wheat and maize deviated within −3.21% to 3.47% and −4.93% to 5.88%, respectively. Based on the RCP ensemble, precipitation was forecast to decrease by 667.40% and 261.73% in winter and summer, respectively, in the western delta compared with the eastern delta, and ultimately to drop to 105.02% and 60.87%, respectively, relative to historical values. These substantial fluctuations in precipitation caused a clear decrease in the green WF of wheat (24.96% and 37.44% in the western and eastern delta, respectively). For maize, they induced a 103.93% decrease in the western delta and an 8.96% increase in the eastern delta. Furthermore, increases in ETc of 8.46% and 12.45% led to substantial increases (8.96% and 17.21%) in the western delta for wheat and maize, respectively, compared with the east. Likewise, the grey WF findings for wheat and maize reveal increases of 3.07% and 5.02% in the western delta, compared with −14.51% and 12.37% in the eastern delta. Hence, our results strongly recommend making optimal use of the eastern delta, which would save 16.58% and 40.25% of the total blue-water requirements of wheat and maize, respectively, compared with other areas.
Overall, the research framework and the results derived from the adopted methodology will help in planning future agricultural water use under climate change.
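The green, blue, and grey components discussed above are commonly computed with the Water Footprint Assessment Manual definitions. Per period, green ET is min(ETc, Peff) and blue ET is max(0, ETc − Peff); multiplying by 10 converts mm to m³/ha, and dividing by yield gives m³/t. Grey WF is the pollutant load divided by (c_max − c_nat), then by yield. The sketch below assumes those definitions, which may differ from the study's exact accounting. Its ETc, rainfall, yield, and fertilizer values are hypothetical.

```python
from typing import Sequence, Tuple


def green_blue_wf(etc_mm: Sequence[float], peff_mm: Sequence[float],
                  yield_t_ha: float) -> Tuple[float, float]:
    """Green and blue WF (m^3/t) from per-period ETc and effective rainfall (mm)."""
    et_green = sum(min(e, p) for e, p in zip(etc_mm, peff_mm))
    et_blue = sum(max(0.0, e - p) for e, p in zip(etc_mm, peff_mm))
    # Factor 10 converts a water depth in mm to a volume in m^3/ha.
    return 10.0 * et_green / yield_t_ha, 10.0 * et_blue / yield_t_ha


def grey_wf(leach_fraction: float, n_applied_kg_ha: float,
            c_max_mg_l: float, c_nat_mg_l: float, yield_t_ha: float) -> float:
    """Grey WF (m^3/t): volume needed to dilute the leached load to the standard.

    mg/L equals g/m^3, so (kg/ha * 1000 g/kg) / (g/m^3) gives m^3/ha.
    """
    load_g_ha = leach_fraction * n_applied_kg_ha * 1000.0
    return load_g_ha / (c_max_mg_l - c_nat_mg_l) / yield_t_ha


# Hypothetical season: four periods of crop ET and effective rainfall (mm).
etc = [40.0, 80.0, 120.0, 100.0]
peff = [30.0, 20.0, 10.0, 5.0]
green, blue = green_blue_wf(etc, peff, yield_t_ha=6.5)
grey = grey_wf(0.1, 150.0, c_max_mg_l=10.0, c_nat_mg_l=0.0, yield_t_ha=6.5)
print(round(green, 2), round(blue, 2), round(grey, 2))
```

Because blue WF is whatever ETc rainfall does not cover, lower precipitation shifts water from the green to the blue component, which is the mechanism behind the blue-water savings attributed to the eastern delta.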

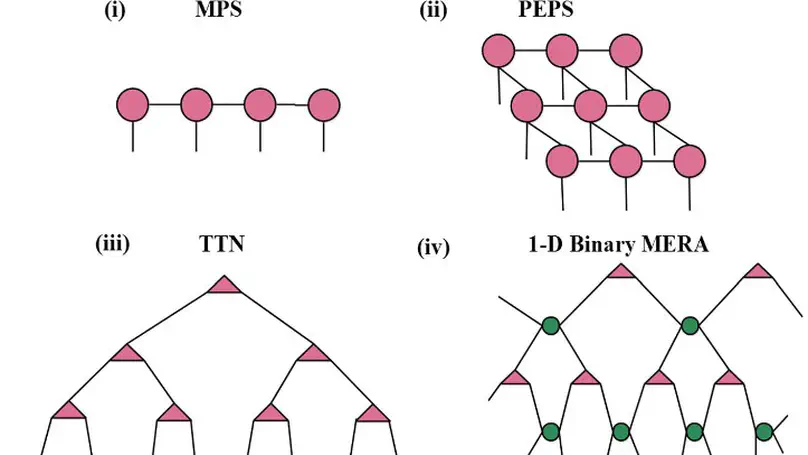

Interest in quantum computing has increased significantly. Tensor network theory has become increasingly popular for simulating strongly correlated, entangled systems. Matrix product states (MPS) are a well-designed class of tensor network states that play an important role in quantum information processing. In this letter, we show that an MPS, as a one-dimensional array of tensors, can be used to classify classical and quantum data. We performed binary classification of the classical machine learning dataset Iris encoded in a quantum state. We also investigated its performance under different parameters on the ibmqx4 quantum computer and showed that MPS circuits can attain better accuracy. Furthermore, the learning ability of an MPS quantum classifier is tested by classifying evapotranspiration (ETo) for the Patiala meteorological station in northern Punjab (India), using three years of a historical dataset (Agri). We used different classification performance metrics to measure its capability. Finally, the results are plotted and the degree of correspondence among the values of each sample is shown.

Inspired by results on finite automata working on infinite words, we studied quantum ω-automata with Büchi, Muller, Rabin, and Streett acceptance conditions. Quantum finite automata play a pivotal part in quantum information and computation theory, and investigating their power over infinite words is a natural goal. We investigated the classes of quantum ω-automata from two aspects: language recognition and closure properties. We show that quantum Muller automata are more powerful than quantum Büchi automata. Furthermore, we describe the languages recognized by one-way quantum finite automata with different quantum acceptance conditions. Finally, we prove the closure properties of quantum ω-automata.

This paper introduces a variant of two-way quantum finite automata named two-way multihead quantum finite automata. Two-way quantum finite automata are more powerful than classical two-way finite automata. However, unlike the one-way quantum finite automata model, two-way quantum finite automata have not been generalized so far. We investigated the newly introduced automata from two aspects: their language recognition capability and their comparison with classical and quantum counterparts. It is proved that a language which cannot be recognized by any one-way or multi-letter quantum finite automaton can be recognized by a two-way quantum finite automaton. Further, it is shown that a language which cannot be recognized by two-way quantum finite automata can be recognized by two-way multihead quantum finite automata with two heads. Furthermore, the quantum variant of two-way deterministic multihead finite automata is shown to need fewer heads to recognize the language consisting of all words whose length is a prime number.

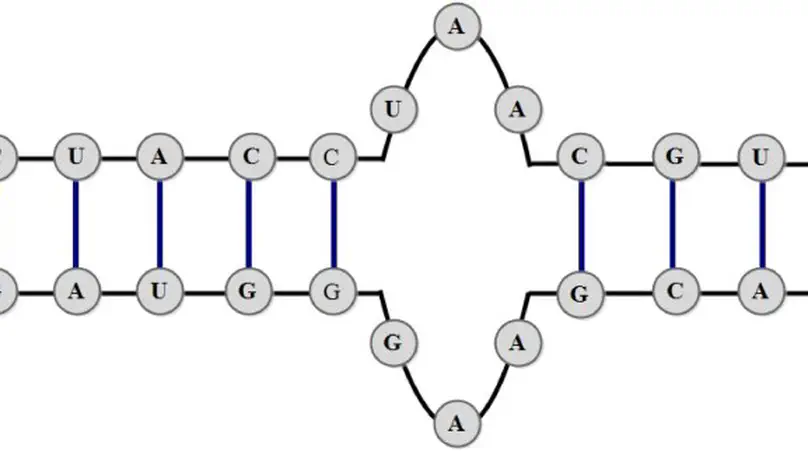

Quantum finite automata (QFA) play a crucial role in quantum information processing theory. The representation of ribonucleic acid (RNA) and deoxyribonucleic acid (DNA) structures using automata theory has had a great influence on computer science, so investigating the relationship between QFA and RNA structures is a natural goal. Two-way quantum finite automata (2QFA) are more powerful than their classical counterparts in language recognition. Motivated by the concept of quantum finite automata, we have modeled RNA secondary structure loops, such as internal loops and double helix loops, using two-way quantum finite automata. The major benefit of this approach is that these sequences can be parsed in linear time. In contrast, two-way deterministic finite automata (2DFA) cannot represent RNA secondary structure loops, and two-way probabilistic finite automata (2PFA) parse these sequences in exponential time. To the best of the authors' knowledge, this is the first attempt to represent RNA secondary structure loops using two-way quantum finite automata.

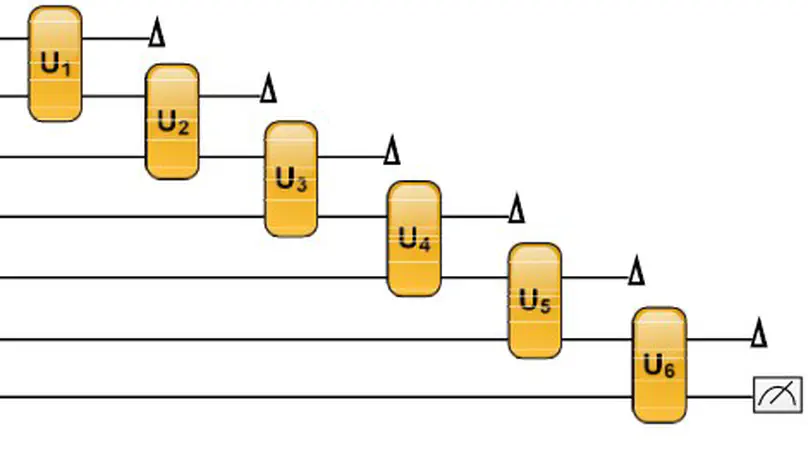

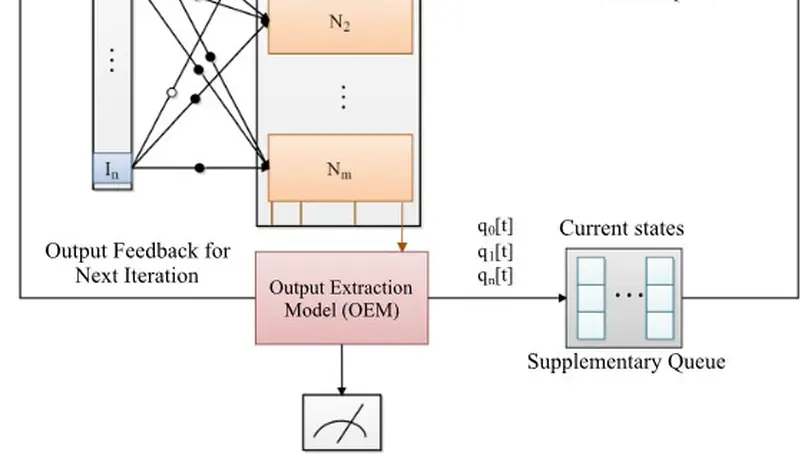

During the last three decades, since the quantum neural network model was proposed, quantum neural computation has received considerable attention from researchers and academic communities. Matrix product states are a well-designed class of tensor network states that play an important role in quantum information processing. Dynamical systems theory helps us study the temporal behavior of systems. In our previous work, we showed the relationship between quantum finite-state machines and matrix product states. In this paper, we use the proposed unitary criteria to investigate the dynamics of matrix product states with quantum weightless neural networks, where the output qubit is extracted and fed back (iterated) to the input. Further, we use the von Neumann entropy to measure the possible entanglement of the output quantum state. Finally, we plot the dynamics of each matrix product state against iterations and analyze the results.
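The von Neumann entropy used above as an entanglement measure is straightforward to compute for a pure two-qubit state. First trace out one qubit to get a 2×2 reduced density matrix ρ_A, then evaluate S = −Σ λ log₂ λ over its eigenvalues. The sketch below is a generic illustration on textbook states, not the paper's matrix-product-state network. It uses the closed-form eigenvalues of a 2×2 Hermitian matrix, so it needs no linear-algebra library.

```python
import math
from typing import Sequence


def entanglement_entropy(amps: Sequence[complex]) -> float:
    """Von Neumann entropy (in bits) of qubit A for a pure two-qubit state.

    amps are the amplitudes of |00>, |01>, |10>, |11> (first label = qubit A).
    """
    a, b, c, d = (complex(v) for v in amps)
    norm2 = abs(a) ** 2 + abs(b) ** 2 + abs(c) ** 2 + abs(d) ** 2
    # Reduced density matrix rho_A = Tr_B |psi><psi|, normalized.
    r00 = (abs(a) ** 2 + abs(b) ** 2) / norm2
    r11 = (abs(c) ** 2 + abs(d) ** 2) / norm2
    r01 = (a * c.conjugate() + b * d.conjugate()) / norm2
    # Eigenvalues of the Hermitian matrix [[r00, r01], [conj(r01), r11]].
    disc = math.sqrt((r00 - r11) ** 2 + 4.0 * abs(r01) ** 2)
    eigenvalues = [(r00 + r11 + disc) / 2.0, (r00 + r11 - disc) / 2.0]
    return -sum(lam * math.log2(lam) for lam in eigenvalues if lam > 1e-15)


s = 1 / math.sqrt(2)
print(entanglement_entropy([s, 0, 0, s]))   # Bell state: maximally entangled
print(entanglement_entropy([1, 0, 0, 0]))   # product state |00>
```

An entropy of 1 bit means the output qubit is maximally entangled with the rest of the state; tracking this value over feedback iterations is one way to quantify how the dynamics generate or destroy entanglement.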

Motivated by the concept of quantum finite-state machines, we have investigated their relation to matrix product states of quantum spin systems. Matrix product states play a crucial role in quantum information processing and are considered a valuable asset for quantum information and communication purposes, as they provide an effective way to represent states of entangled systems. In this paper, we design quantum finite-state machines for one-dimensional matrix product state representations of quantum spin systems.
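As a reminder of what a one-dimensional MPS representation looks like, the amplitude of a basis state |s₁…s_N⟩ in a periodic MPS is Tr(A^{s₁} A^{s₂} ⋯ A^{s_N}). The textbook example below uses bond dimension 2 and the same pair of site tensors on every site to encode the GHZ state. It is an illustrative assumption, not the quantum finite-state-machine construction from the paper. Reading the site tensors as transition matrices indexed by the emitted spin value is what makes the link to finite-state machines natural.

```python
import itertools
import math
from typing import Dict, List, Sequence

Matrix = List[List[float]]

# Bond-dimension-2 site tensors for the GHZ state (same tensors on every site).
GHZ_TENSORS: Dict[int, Matrix] = {
    0: [[1.0, 0.0], [0.0, 0.0]],
    1: [[0.0, 0.0], [0.0, 1.0]],
}


def matmul(x: Matrix, y: Matrix) -> Matrix:
    """Multiply two square matrices stored as nested lists."""
    n = len(x)
    return [[sum(x[i][k] * y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def mps_amplitude(bits: Sequence[int], tensors: Dict[int, Matrix]) -> float:
    """Unnormalized amplitude <bits|psi> = Tr(A^{s1} A^{s2} ... A^{sN})."""
    dim = len(tensors[0])
    prod = [[1.0 if i == j else 0.0 for j in range(dim)] for i in range(dim)]
    for s in bits:
        prod = matmul(prod, tensors[s])
    return sum(prod[i][i] for i in range(dim))


n_sites = 3
amps = {bits: mps_amplitude(bits, GHZ_TENSORS)
        for bits in itertools.product((0, 1), repeat=n_sites)}
norm = math.sqrt(sum(a * a for a in amps.values()))
for bits, a in amps.items():
    if a != 0.0:
        print("".join(map(str, bits)), a / norm)
```

The same tensors describe the GHZ state for any chain length, so the memory needed grows linearly with the number of sites rather than exponentially, which is the main appeal of MPS for one-dimensional spin systems.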
